Introduction

MatX is a company that showed up in my X feed.

Ex-Google chip designers have launched startup MatX to develop AI chips specifically for LLMs, Bloomberg reports. “Inside of Google, there were lots of people who wanted changes to the chips for all sorts of things, and it was difficult to focus just on LLMs. We chose to leave…

Dan Nystedt (@dnystedt), March 30, 2024

So today I looked into MatX.

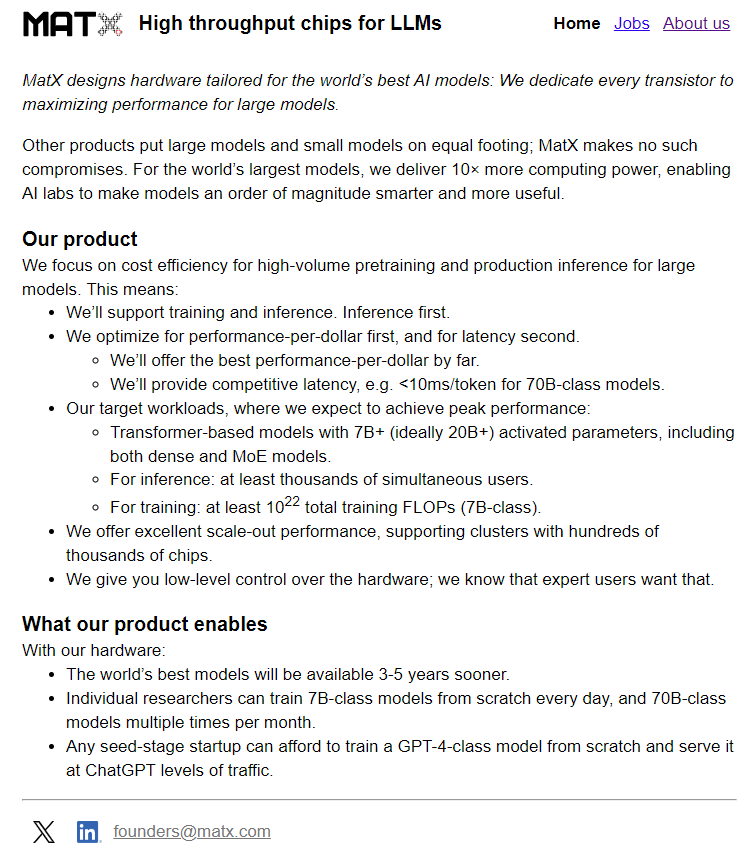

MatX High throughput chips for LLMs

The site above says the following. I quote it here so I can comment on it.

MatX designs hardware tailored for the world’s best AI models: We dedicate every transistor to maximizing performance for large models. Other products put large models and small models on equal footing; MatX makes no such compromises. For the world’s largest models, we deliver 10× more computing power, enabling AI labs to make models an order of magnitude smarter and more useful.

So far, the line

- We dedicate every transistor to maximizing performance for large models.

sounds like something I have heard somewhere before.

Our product We focus on cost efficiency for high-volume pretraining and production inference for large models. This means:

For now, the focus looks like inference.

We’ll support training and inference. Inference first.

That said, training will be supported too. Inference just comes first.

We optimize for performance-per-dollar first, and for latency second. We’ll offer the best performance-per-dollar by far.

They are going all in on cost performance!

We’ll provide competitive latency, e.g. <10ms/token for 70B-class models. Our target workloads, where we expect to achieve peak performance: Transformer-based models with 7B+ (ideally 20B+) activated parameters, including both dense and MoE models.

So the targets are models of around 7B, 20B, and 70B?

For inference: at least thousands of simultaneous users.

For inference, they will support at least thousands of simultaneous users.
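To put the latency and concurrency targets into more familiar units, here is a minimal sketch that converts them into tokens per second. The 10 ms/token value is the quoted upper bound. The user count of 1,000 is only my placeholder for "thousands", and the aggregate figure assumes every user is streaming at that latency at the same moment, which the site does not actually claim.

```python
# Back-of-the-envelope numbers derived from the quoted targets.
MS_PER_TOKEN = 10.0        # quoted upper bound: <10 ms/token for 70B-class models
CONCURRENT_USERS = 1_000   # assumption: a conservative placeholder for "thousands"


def tokens_per_second(ms_per_token: float) -> float:
    """Per-user decode rate implied by a per-token latency in milliseconds."""
    return 1000.0 / ms_per_token


def aggregate_tokens_per_second(ms_per_token: float, users: int) -> float:
    """Total decode rate if every user streams at that latency simultaneously."""
    return tokens_per_second(ms_per_token) * users


if __name__ == "__main__":
    print(tokens_per_second(MS_PER_TOKEN))                              # per user
    print(aggregate_tokens_per_second(MS_PER_TOKEN, CONCURRENT_USERS))  # whole system
```

Under these assumptions, each user sees at least 100 tokens per second, and the system as a whole would be producing on the order of 100,000 tokens per second.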

For training: at least 10^22 total training FLOPs (7B-class).

For training, I honestly cannot tell how impressive 10^22 total FLOPs for a 7B-class model is.
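To get a feel for that number, here is a minimal sketch using the common rule of thumb that training a dense transformer costs roughly 6 x parameters x tokens FLOPs. That rule is my own assumption, not something the MatX site states. I am also reading the quoted budget as 10^22 FLOPs and "7B-class" as exactly 7e9 parameters.

```python
# Rough estimate: how many training tokens does a FLOP budget buy?
# Assumption (not from the MatX site): training FLOPs ~= 6 * params * tokens.
FLOPS_PER_PARAM_PER_TOKEN = 6.0

TRAINING_FLOPS = 1e22   # quoted budget, read as 10^22 total training FLOPs
PARAMS = 7e9            # assumption: "7B-class" taken as exactly 7 billion parameters


def tokens_for_budget(total_flops: float, params: float) -> float:
    """Number of training tokens implied by a FLOP budget under the 6*N*D rule."""
    return total_flops / (FLOPS_PER_PARAM_PER_TOKEN * params)


if __name__ == "__main__":
    tokens = tokens_for_budget(TRAINING_FLOPS, PARAMS)
    print(f"{tokens / 1e9:.0f}B tokens")
```

Under those assumptions, 10^22 FLOPs corresponds to roughly 240B training tokens for a 7B model.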

We offer excellent scale-out performance, supporting clusters with hundreds of thousands of chips.

They are aiming for clusters on the order of 100,000 x n chips!

We give you low-level control over the hardware; we know that expert users want that.

Low-level control is another thing I have heard somewhere before.

What our product enables With our hardware: The world’s best models will be available 3-5 years sooner. Individual researchers can train 7B-class models from scratch every day, and 70B-class models multiple times per month.

A 7B-class model can be trained from scratch every day, and a 70B-class model can be trained several times a month...
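If a 7B-class run really costs the 10^22 FLOPs quoted earlier and has to finish within a day, the sustained compute that implies can be worked out directly. This is only a sketch: pairing that budget with "every day" is my own reading, I take a day as exactly 24 hours, and I ignore utilization losses entirely.

```python
# Sustained compute needed to finish a fixed FLOP budget within a time limit.
TRAINING_FLOPS = 1e22          # quoted 7B-class budget, read as 10^22 FLOPs
SECONDS_PER_DAY = 24 * 60 * 60  # assumption: one run takes exactly one day


def required_flops_per_second(total_flops: float, seconds: float) -> float:
    """Average FLOP/s that must be sustained to spend total_flops in `seconds`."""
    return total_flops / seconds


if __name__ == "__main__":
    rate = required_flops_per_second(TRAINING_FLOPS, SECONDS_PER_DAY)
    print(f"{rate / 1e15:.1f} PFLOP/s sustained")
```

That works out to roughly 116 PFLOP/s of sustained compute available to a single researcher.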

Any seed-stage startup can afford to train a GPT-4-class model from scratch and serve it at ChatGPT levels of traffic.

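Even a GPT-4-class model can be trained without much money!

It would be a shame if this disappeared, so I am also quoting the whole thing as an image.

In short, the goal seems to be building something that can do inference and training cheaply.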

Funding

They have already raised $25M... and a lot of the investors seem to be individuals.

Founder

- Reiner Pope-san (cofounder and CEO) : 2022.11

  - .... (2012.4 - 2022.11)

- Mike Gunter-san (cofounder and CTO) : 2022.11

  - SGI (1996.7 - 1999.9) .... (2005.10 - 2022.11)

- Avinash Mani-san (Chief Development Officer, Silicon) : 2024.1 -

I have put together a list of the members here.

Jobs

- ASIC/SOC CAD Engineer

- ASIC/SOC Micro-Architect and RTL Design Engineer

- ASIC/SOC Silicon Design-For-Test Lead

- ASIC/SOC Silicon Physical Design Engineer

- ASIC/SOC Silicon Verification Engineer

- System Hardware Lead

Salary: $120,000 - $400,000 + equity + benefits

Closing thoughts

They are only starting development now, so I wonder when the product will actually come out.

The world’s best models will be available 3-5 years sooner.

That is what the site says, but I wonder whether they can make it in time...

Reiner Pope-san is the first name listed. I wonder how this will turn out.

Mike Gunter-san also appears in the ACKNOWLEDGMENTS.